I am currently marking coursework for which students were allowed to use generative AI, provided that they detail how they used it and include screenshots. This reminded me of a paper that I read some time ago, entitled “Competence Penalty Is a Barrier to the Adoption of New Technology”.

This paper reports a study by Phyliss Jia Gai, Jiayi Hou and Yanping Tu, where they asked engineers to evaluate pieces of code. The twist in this study was that, even though the pieces of code were identical, the researchers:

1) told some assessors that the code had been written by a male and others that it had been written by a female; and

2) told some assessors that the code had been written with AI assistance and others that it had been written without.

Across the board, assessors rated engineers who had used AI to assist with the work as less competent than those who had not, even though the underlying code was rated as having the same quality. That is, disclosing AI use did not undermine perceptions of the output, but it did undermine perceptions of the professional who produced it. More significantly, this competence penalty was roughly twice as large for female engineers (a 13% drop) as for male engineers (a 6% drop).

To be fair, the penalty was not purely due to AI: when evaluating the code, assessors rated the work labelled as written by female engineers as less competent than the same work labelled as written by their male counterparts. Even more striking, the harshest penalties came from male reviewers who had not adopted the AI tool themselves. So, in effect, the AI penalty amplified pre-existing biases against the technical competency of female professionals, shaped by workplace hierarchies and harmful stereotypes.

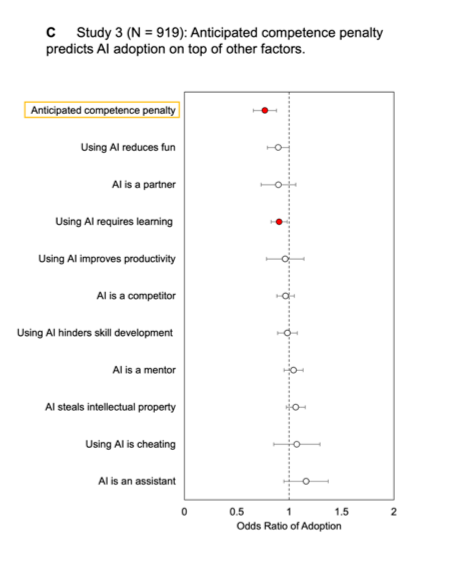

This study is important for understanding the gender gap in AI adoption. It shows that, for women in professional environments, a lack of engagement with generative AI may not be solely due to a lack of ambition or initiative. Rather, it may be because the perceived reputational cost outweighs the potential productivity gains.

This study’s findings shift how we think about the problem. If using AI can harm a woman’s professional reputation, then “getting more women to use AI” is not a solution, but rather an invitation to take on risk.

So, on this (day after) International Women’s Day, I want to encourage you to reconsider how we frame the gender gap in AI adoption. Simply encouraging women to adopt these tools is not enough. The real challenge may be confronting the workplace biases that make using them risky in the first place.