The report “Generative Language Models and Automated Influence Operations: Emerging Threats and Potential Mitigations” discusses how large language models like the one underpinning ChatGPT might be used for disinformation campaigns. It was authored by Josh A. Goldstein, Girish Sastry, Micah Musser, Renee DiResta, Matthew Gentzel and Katerina Sedova, and is available in the arXiv repository.

If you have trouble sleeping, you’d better skip it, as it doesn’t make for reassuring reading. But, if you are curious, then it offers quite an interesting view into how this technology can make it so much easier, cheaper, and more effective to produce disinformation. Namely, the report highlights that (page 23):

- As generative models drive down the cost of generating propaganda, smaller disinformation actors will be able to afford influence campaigns, meaning that we will see a larger and more diverse set of actors;

- Propagandists-for-hire (think Cambridge Analytica) can enter the space as third-party suppliers of automated text for disinformation campaigns;

- The ability to easily and cheaply generate new text will expand the scale of disinformation campaigns;

- The ability to easily test text will make campaigns more effective;

- The ability to engage in extended back-and-forth conversations enables a new form of disinformation campaign: one where personalized chatbots “interact with targets one-on-one and attempt to persuade them of the campaign’s message” (page 25);

- Generative AI can result in the production of highly credible and persuasive content;

- The ability to generate text that is “semantically distinct (and) narratively aligned” (page 27) will allow actors to evade current bot detection systems.

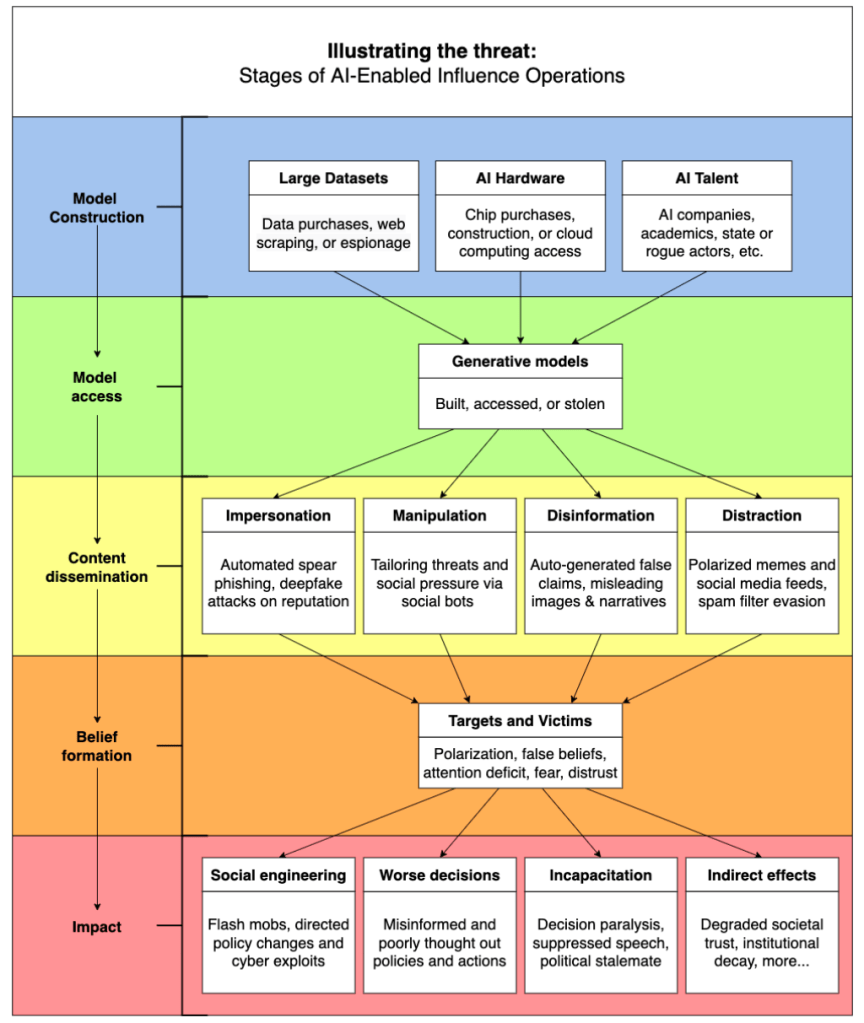

The authors go on to discuss various potential mitigating actions at the level of language models (as opposed to wider societal interventions such as education for digital literacy, for instance), for each of the stages of what they call “AI-enabled influence operations”:

I find it really interesting – and helpful – that the authors are looking at the value destruction potential of this technology. As Clear and I discuss in the paper “Artificial intelligence and machine learning as business tools: A framework for diagnosing value destruction potential”, too often the discourse around digital technology is focused on all the potential novel findings and the cost savings opportunities, while neglecting all the ways in which implementing the technology can negatively impact the organisation’s stakeholders. In particular, the promotional literature seems to ignore the reputational effects: both the halo effect of adopting a certain technology (as Keegan, Yen and I discuss in “Power negotiation on the tango dancefloor: The adoption of AI in B2B marketing”), and the reputational damage that occurs when things go wrong.

I was reminded of the whole issue of value destruction when I heard the 20th February 2023 episode of the podcast Reasons to be Cheerful. This episode mentions how Shell was being sued by a group of shareholders on the basis that, by neglecting to prepare for climate change and net zero goals, the company’s management was failing to ensure the long-term viability of the company and to protect the shareholders’ investment.

This left me wondering if OpenAI and other companies developing generative AI software are calculating the reputational damage that they may suffer when their models are used for disinformation campaigns.

Maybe we should add a line in that Impact “Indirect effects” box for “value destruction for the firm”, covering future scandals involving their products (like Facebook/Cambridge Analytica, or the fatal accident involving Uber’s self-driving car), higher insurance premiums, or shareholders’ legal action?
