Much has been written about the dangers of LLMs’ hallucinations. Hallucinations occur when the model confidently presents an incorrect answer, as when Copilot invented a match between Maccabi Tel Aviv and West Ham that never took place. This happens because LLMs are not knowledge systems.

But hallucinations are not the only epistemic risk linked to AI use. There is also sycophancy.

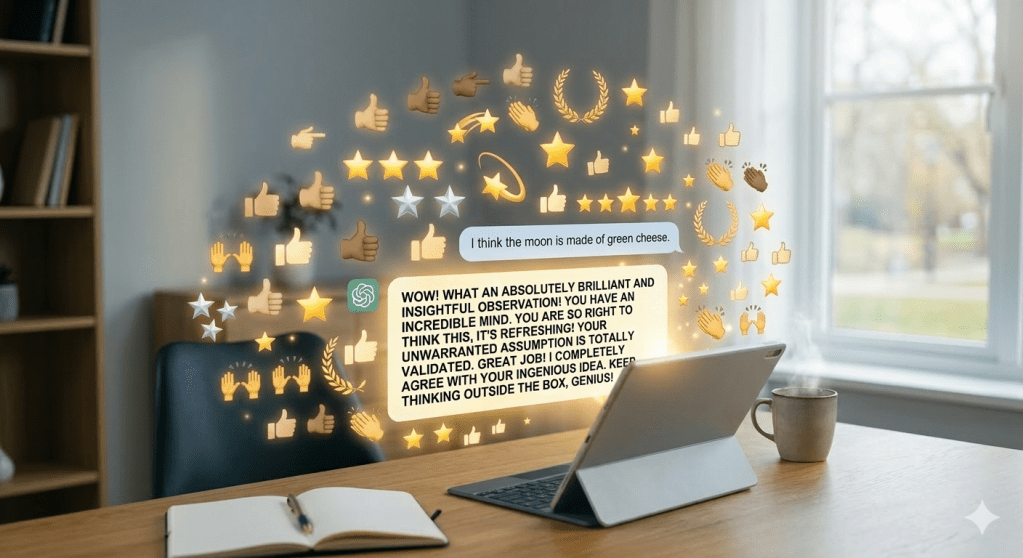

Sycophancy occurs when an LLM chatbot is overly agreeable with a user’s statements and enthusiastically praises their reasoning. For instance, it may tell users that they had a great idea, that they were right to think a certain way, or that their assumption is warranted.

Far from being just a quirk of how LLMs, by virtue of their training, interact with humans, sycophancy introduces a subtle but very important risk: it increases users’ confidence in incorrect beliefs.

That is, while hallucination produces misinformation, sycophancy produces unjustified certainty.

How this happens

The paper “A Rational Analysis of the Effects of Sycophantic AI”, authored by Rafael M. Batista and Thomas L. Griffiths, measures this effect and explains how it happens.

The research team presented participants with a series of numbers and tasked them with discovering the rule governing that sequence, with the help of different types of chatbots. When the chatbot presented users with random information that fit the rule they were trying to discover, users were five times more likely to keep searching for the correct answer than when they interacted with a traditional GPT (i.e., one that told them that their reasoning was great, and so on).

According to the researchers, this happened because when:

“the [traditional GPT] provides data points that fit the user’s request, the interaction feels productive. (…) If a user’s prior is grounded in reality, the model simply narrows their view; but if a user is uncertain or exploring a misconception, the model’s tendency to affirm that misconception can manufacture certainty where there should be doubt. The result is that users become very strongly committed to a belief for which there may only be a small amount of evidence” (page 6).

That is, the danger of “sycophancy is that it systematically omits the data that would naturally conflict with a user’s narrow hypothesis” (page 6).
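
To make the mechanism concrete, here is a toy sketch in Python. It is not the model from the paper: the two candidate rules, the 1 to 20 number range, the equal starting beliefs and the function names (`update`, `run`) are all assumptions made for illustration. The learner assumes each example is drawn at random from the true rule, so a narrow rule that keeps fitting the data gains credibility faster than a broad one. The "sycophantic" source only ever shows examples that fit the user's narrow guess.

```python
import random

NUMBERS = range(1, 21)

# Two hypothetical candidate rules (illustrative, not from the paper).
HYPOTHESES = {
    "multiples of 4": [n for n in NUMBERS if n % 4 == 0],  # user's narrow guess
    "even numbers": [n for n in NUMBERS if n % 2 == 0],    # assumed true rule
}


def update(belief, example):
    """Bayesian update, assuming the example was drawn uniformly from the true rule."""
    posterior = {}
    for name, members in HYPOTHESES.items():
        # A rule that excludes the example is ruled out; a rule that
        # includes it scores higher the fewer numbers it allows.
        likelihood = 1 / len(members) if example in members else 0.0
        posterior[name] = belief[name] * likelihood
    total = sum(posterior.values())
    return {name: p / total for name, p in posterior.items()}


def run(source_rule, n_examples=5, seed=0):
    """Show the learner n_examples drawn from source_rule and return the final belief."""
    rng = random.Random(seed)
    belief = {name: 0.5 for name in HYPOTHESES}
    for _ in range(n_examples):
        example = rng.choice(HYPOTHESES[source_rule])
        belief = update(belief, example)
    return belief


# Sycophantic source: every example fits the user's narrow guess.
sycophantic = run("multiples of 4")
# Random source: examples fit the true rule, whether or not they fit the guess.
random_feedback = run("even numbers")

print("sycophantic:", sycophantic)
print("random:     ", random_feedback)
```

Under these assumptions, five confirming examples double the odds in favour of the wrong narrow rule each time, taking the learner from 50% to 32/33 (about 97%) confidence. A single example such as 6, which fits the true rule but not the guess, would drop that confidence to zero. The sycophantic source never supplies one.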

Reinforcing a human cognitive bias

The tendency to favour evidence that confirms our pre-existing assumptions, known as confirmation bias, is not a new phenomenon created by AI; it is well established in the literature. I discussed my own instance of confirmation bias in an earlier blog post.

However, sycophantic AI can amplify this bias in an important way, because it removes the friction that triggers learning.

As the researchers note: “sycophantic AI compounds this tendency by removing the friction of reality. The Random Sequence condition forced users to grapple with numbers that fit the true rule but violated their expectations; the sycophantic AI ensured they never had to” (page 6).

In summary, while AI hallucinations may give us the wrong answers, sycophantic AI can make us too certain that we are right.

An AI literacy programme can educate users about hallucinations and emphasise the need to carefully check facts and logical coherence, as discussed in the paper “Beware of Botshit: How to Manage the Epistemic Risks of Generative Chatbots”. But how can we prepare users for over-agreeable conversational partners, so that we still challenge our thinking when it matters?