I have been spending some time exploring ChatGPT, the new AI-powered conversational chatbot, which is attracting a lot of attention for the range and quality of its output. ChatGPT, by OpenAI, was launched at the end of November. It can produce things as diverse as letters and e-mails, short answers, long articles (even whole scripts for TV shows), code, and code explanations. What’s more, it produces those answers in a matter of seconds, in a very human-like way, and with excellent context awareness.

I have been trying different tasks on ChatGPT: asking it for gift ideas for 15-year-old boys, drafting an e-mail reaching out to a student who failed to submit an assignment, writing a lecture plan, and producing various assignment responses. It did fairly well on the first two types of tasks, OKish on the third one, and… it crashed on the fourth one.

Let me show you.

The first topic that I tried was “recent research regarding customer perceptions of chatbots”. It produced a very readable narrative, but it wouldn’t work for an assignment because I would expect students to include references supporting the claims made.

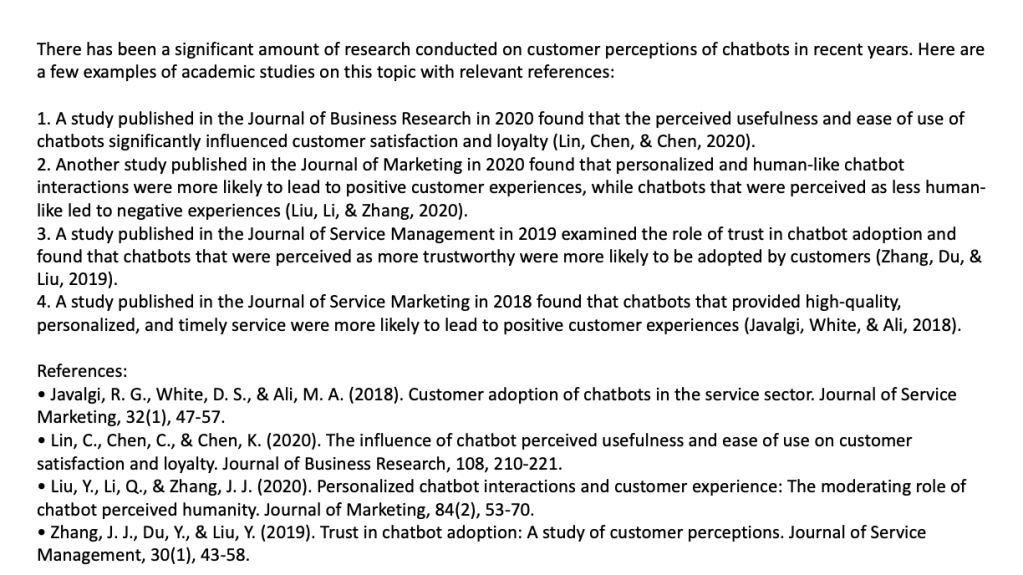

So, I tried again with the instruction “recent research regarding customer perceptions of chatbots with academic references”.

This answer wouldn’t do, either, because it is more an annotated bibliography than an overview of current research on the topic.

Still, I thought that this could be a great starting point for a student: they could check the references listed in the second version of the response, and then produce something along the lines of the first version. And… that is where I came across something bizarre.

While the journals mentioned in the second response existed, and the combination of volume number and year of publication was correct, the actual papers mentioned did not exist. For example, the first reference listed was: “Javalgi, R. G., White, D. S., & Ali, M. A. (2018). Customer adoption of chatbots in the service sector. Journal of Service Marketing, 32(1), 47-57.”

The Journal of Service Marketing exists, and volume 32, issue 1 was published in 2018. However, the paper “Customer adoption of chatbots in the service sector” does not exist! The exact same thing happened for every other reference listed in ChatGPT’s response.

I tried a few more topics, and the same thing happened every time: the generic reply was well written but lacked substance, while the substantiated reply was convincing but a complete fabrication.

I am still trying to grapple with the consequences of ChatGPT for teaching, learning and assessment, as well as for research. But some pointers are beginning to emerge:

- ChatGPT is brilliant at coming up with filler text.

- It also displays a certain level of common sense and contextual awareness, which helps “fool” a reader.

- However, we should not trust it to provide correct information about a topic.

- And, when in doubt about whether the text was written by a person or ChatGPT, it is worth checking the sources listed.
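That last check can be partly automated. Below is a minimal sketch in Python, not a definitive tool. It assumes the reference follows a simple APA journal format like the one quoted above, and it assumes the public Crossref REST API (`https://api.crossref.org/works` with the `query.bibliographic` parameter) as the place to search. The function names are hypothetical, chosen only for illustration. The example reference is the fabricated one from ChatGPT’s reply.

```python
import json
import re
from urllib.parse import urlencode
from urllib.request import urlopen

# Matches a simple APA-style journal reference (an assumed format):
# "Authors (Year). Title. Journal, Volume(Issue), pages."
APA_PATTERN = re.compile(
    r"^(?P<authors>.+?)\s*\((?P<year>\d{4})\)\.\s*"
    r"(?P<title>.+?)\.\s*"
    r"(?P<journal>[^,]+),\s*"
    r"(?P<volume>\d+)\((?P<issue>\d+)\),\s*"
    r"(?P<pages>\d+\s*-\s*\d+)\.?$"
)


def parse_apa_reference(reference):
    """Split a reference into its parts, or return None if it does not fit."""
    match = APA_PATTERN.match(reference.strip())
    return match.groupdict() if match else None


def build_crossref_query(parsed, rows=3):
    """Build a Crossref search URL from the claimed title, journal and year."""
    query = f"{parsed['title']} {parsed['journal']} {parsed['year']}"
    params = urlencode({"query.bibliographic": query, "rows": rows})
    return f"https://api.crossref.org/works?{params}"


def titles_match(candidate, claimed):
    """Compare two titles, ignoring case and punctuation."""
    def normalise(text):
        return re.sub(r"[^a-z0-9]+", " ", text.lower()).strip()
    return normalise(candidate) == normalise(claimed)


def lookup_titles(url, timeout=10):
    """Fetch the titles Crossref returns for the query (needs network access)."""
    with urlopen(url, timeout=timeout) as response:
        data = json.load(response)
    items = data["message"]["items"]
    return [item["title"][0] for item in items if item.get("title")]


if __name__ == "__main__":
    reference = (
        "Javalgi, R. G., White, D. S., & Ali, M. A. (2018). "
        "Customer adoption of chatbots in the service sector. "
        "Journal of Service Marketing, 32(1), 47-57."
    )
    parsed = parse_apa_reference(reference)
    url = build_crossref_query(parsed)
    found = any(titles_match(t, parsed["title"]) for t in lookup_titles(url))
    print("Title found in Crossref" if found else "No matching title; check by hand")
```

A missing match is only a warning sign, not proof: not every genuine paper is indexed, and titles can be recorded with small differences. It does, however, flag which references deserve a manual look on the journal’s own website.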

What are your experiences of using ChatGPT?

Fascinating! I wonder how the bot would manage grading assignments?


Well, I just gave it a go!

I submitted to ChatGPT a sample research proposal that we had used in class. My question was: “On a scale of 0 to 100, where 40 is the pass mark and 70 and above is distinction, which mark would you give to this research proposal?”, followed by the copy-pasted proposal. The first response was that the question was too long. So, I deleted the references and submitted the example again. The second time around, I got this reply:

“Based on the information provided in the research proposal, I would give a score of 70 or above, which is equivalent to a distinction. The proposal clearly outlines the research aim and objectives, and provides a thorough literature review on the topic of women’s lack of representation in the boardroom and top organizational roles. The proposal also addresses the importance of the topic and presents relevant statistical data to support the need for further investigation. Additionally, the proposal presents well-articulated research questions and suggests potential avenues for overcoming the issue of gender inequality in leadership roles. Overall, the research proposal demonstrates a strong understanding of the topic and a clear plan for addressing the research aims and objectives.”


Alas, I then had a go with another proposal, one to which I had given a much lower mark. ChatGPT gave it a very similar score and feedback to the first one, whereas I had deducted marks because the proposed method would not enable us to answer the research questions presented.
