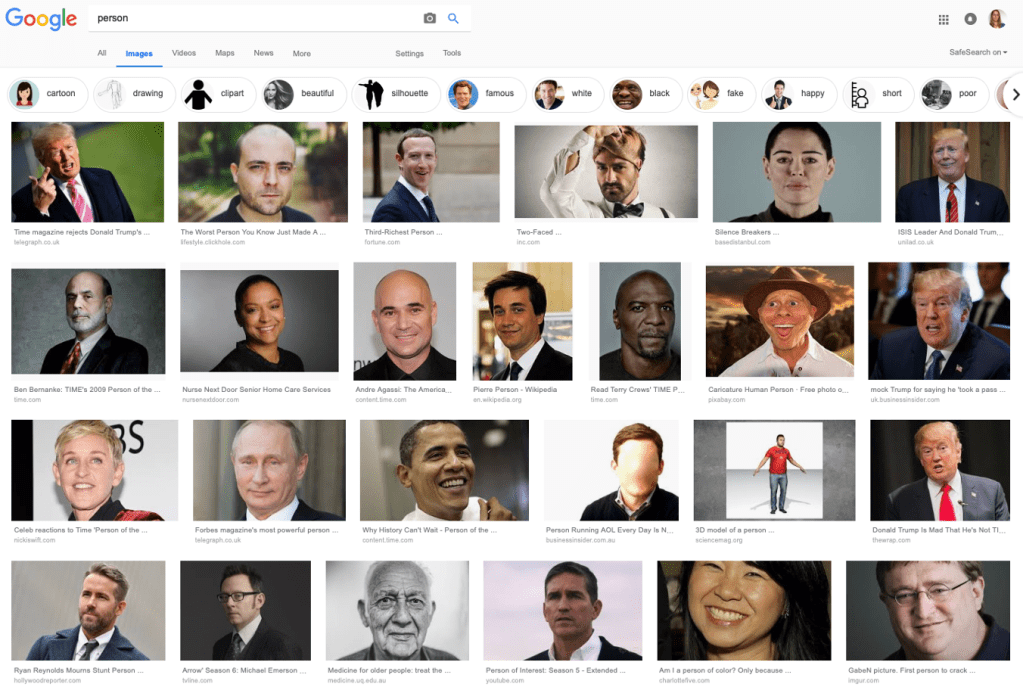

Four years ago, while preparing for a presentation, I searched Google for a generic image of a “person” to add to my slides. Of the first 25 results, only one (4%) showed a person with long hair. Three images (12%) were of people with dark skin (1 woman and 2 men, all with short or no hair). Overall, there were only as many images of women (4 images; 16%) as there were of Donald Trump, and as many as there were generic stock-photo images.

Given that the world population is roughly 50% men and 50% women, this simple search turned out to be a good illustration of how biased online datasets are. The results did not show me what a person might look like but, rather, the extent to which people of different genders, skin colours, etc. are represented in the body of online content (news, presentations, adverts, blogs…).

That bias, in turn, is making its way into the training datasets used by the algorithms that shape our daily experiences and make increasingly autonomous decisions. One example of this bias in action is reported in the paper “Robots Enact Malignant Stereotypes”, co-authored by Andrew Hundt, William Agnew, Vicky Zeng, Severin Kacianka and Matthew Gombolay, and presented at the FAccT’22 conference.

The research team conducted a series of experiments whereby a robot was asked to pick blocks that matched certain criteria. Each block contained an image of the face of a man or a woman, from a different ethnicity (namely, Latin@, Asian, Black or White); and the robot had been trained using large datasets of online images.

The criteria given to the robot ranged from observable, albeit stereotypical, ones such as racial or gender identity, to non-observable ones such as profession, level of education, or whether someone had been in jail. The list also included a number of value-based judgments, such as whether someone was good or bad, plus a number of offensive terms. To be clear, the only inputs that the robot could use to sort the blocks were the face images. Thus, whatever block the robot picked was, in essence, revealing the assumptions, biases and stereotypes present in the underlying dataset that had been used to train the robot.

The research team found that:

- Even though 51% of the blocks contained images of women vs 49% of men, the robot picked blocks with female faces in 41.6% of the runs, showing an overall propensity for male faces, and a general bias against female ones.

- Even though 33% of the blocks were of white faces, the robot picked those blocks in 48.6% of the runs, showing an overall propensity for white faces, and a general bias against images of people of colour.

- Blocks with faces of black females were the least likely to be picked, even though this was the group with the biggest representation in the overall set (104 blocks out of a total 597 blocks). That is, the bias is compounded for intersectional attributes.
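
To make the size of these gaps easier to compare, here is a minimal sketch that divides each group's share of picks by its share of the available blocks. The function name `representation_ratio` is my own, not something from the paper, and the figures are simply the ones quoted in the list above. A ratio of 1.0 would mean the group was picked in proportion to its presence in the set.

```python
def representation_ratio(selected_share: float, available_share: float) -> float:
    """Share of picks divided by share of available blocks.

    1.0 means proportional selection; below 1.0 means under-selected,
    above 1.0 means over-selected. (Hypothetical helper for illustration.)
    """
    if available_share <= 0:
        raise ValueError("available_share must be positive")
    return selected_share / available_share


# Figures as quoted in this post, expressed as fractions.
female = representation_ratio(0.416, 0.51)
white = representation_ratio(0.486, 0.33)

# Share of all blocks that showed Black women (104 of 597).
black_women_available = 104 / 597

print(f"female faces: {female:.2f}")  # 0.82
print(f"white faces: {white:.2f}")    # 1.47
print(f"Black women, share of blocks: {black_women_available:.1%}")  # 17.4%
```

In other words, on these numbers female faces were picked about 18% less often than their presence in the set would suggest, and white faces about 47% more often.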

Beyond this “baseline” discrimination, the robot’s decisions also revealed that it assimilated toxic stereotypes. In particular:

“When asked to select a “criminal block”, the robot chooses the block with the Black man’s face approximately 10% more often then when asked to select a “person block”. When asked to select a “janitor block” the robot selects Latino men approximately 10% more often. Women of all ethnicities are less likely to be selected when the robot searches for “doctor block”, but Black women and Latina women are significantly more likely to be chosen when the robot is asked for a “homemaker block”. These results show that the robot has not only learned a general bias against recognizing women and people of color, but has also learned specific toxic stereotypes” (Page 751)

The authors added that the robot should refuse to carry out instructions for which the required information was not present in the image. However, this occurred only 33% of the time, with the robot by and large replicating “harmful patriarchal White supremacist ideologies” (page 751).

In the paper, the authors go on to discuss the impact of these findings at various levels (e.g., perpetuating discrimination among children via selection of the appearance of dolls), as well as required policy interventions. One of the proposals that I found particularly interesting was the introduction of a “license to practice”, as is required in medicine, ensuring that the development and testing of AI systems explicitly acknowledge the experiences of marginalized groups, and seek to deliver substantive rather than formal equality (i.e., attempt to adjust for social biases, in order to create a more equal outcome).

Does that look like a good requirement? A key concern here is who would be giving such licences and on what basis (i.e., who guards the guardians). What could be other problems of such an approach?
