AI agents are increasingly popular in customer interfaces. Sometimes they are the only option; at other times, they are the default option before consumers are escalated to a human agent. One example that I mention frequently is AI-powered chatbots.

Firms may use AI-powered agents to cut costs, to benefit from their superior analytical capabilities, or even for reputational reasons (for instance, to seem responsive to customer requests, to appear on a par with competitors, or to position themselves as a technological powerhouse). However, there may be another reason: could it be that AI agents actually help customers feel more comfortable sharing personal information that is important to them?

AI agents need data in order to produce recommendations that are relevant to customers. Customers may be wary of AI agents' capacity to collect and retain data and, therefore, resist disclosing personal information. However, customers may instead perceive AI agents as unable to form opinions or judgments about them (that is, as lacking the ability to make social judgments) and, thus, not be at all concerned about disclosing personal information. In scenarios where this second perception dominates, firms would be well advised to use AI agents to interact with customers as a means of providing better service (over and above cost and other considerations)… but with care!

Researchers Tae Woo Kim, Li Jiang, Adam Duhachek, Hyejin Lee, Aaron Garvey, Richard P. Bagozzi, Ming-Hui Huang and Michael K. Brady investigated, through a series of experiments, when consumers prefer to disclose personal information to human vs. AI agents. They reported their findings in the paper “Do You Mind if I Ask You a Personal Question? How AI Service Agents Alter Consumer Self-Disclosure”, published in the Journal of Service Research.

In line with previous research, Kim and colleagues found that participants in their studies preferred to disclose sensitive information (for instance, regarding a medical condition) to AI (vs. human) agents, but the reverse was true for non-sensitive information. Furthermore, the researchers found that this difference in behaviour was driven by perceptions of the agents' ability to make social judgments, not by privacy concerns.

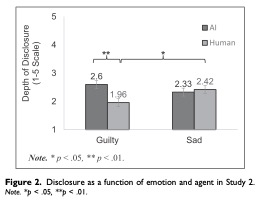

Participants were also more likely to disclose information to AI (vs. human) agents when they felt guilt (e.g., for stealing). However, there was little difference in disclosure when they felt sadness (e.g., due to a death in the family), because in those situations the overriding need is for social support rather than for an absence of social judgment. When the research team used AI agents with enhanced empathy skills, disclosure of personal information in the sadness scenario increased.

However, in service situations where social judgment is needed, participants reverted to preferring human agents. Specifically, the research team created a scenario in which the agent selected photos from participants' phones to share publicly on social media. While the AI agents' perceived lack of social judgment had encouraged disclosure in the sensitive-information and guilt scenarios, the opposite happened in this one. Participants' belief that AI agents were unable to make social judgments meant that they worried the AI would not be able to tell which pictures were socially appropriate, and would end up sharing socially inappropriate photos. Thus, the study “shows that the utilization of AI does not always increase consumer disclosure, but can significantly and substantially decrease disclosure when social judgment is needed to screen out socially inappropriate information” (page 662).

Altogether, the studies show that perceptions of customer-facing agents' social judgment and social support capabilities outweigh other concerns (e.g., privacy) when customers decide whether to disclose personal information to AI vs. human agents. The authors say:

“Our work reveals how selective use of AI versus human representatives, in addition to the selective emphasis on certain AI attributes, can systematically increase or decrease consumer self-disclosure in service contexts. For example, sharing one’s private — and potentially embarrassing — information with a doctor could sometimes lead to finding a better medical treatment for a patient. In this case, an AI doctor with certain human features de-emphasized could serve better in terms of collecting important information for optimal medical treatment. However, our research also reveals that in contexts where consumers seek emotional support (e.g., experiencing sadness from a less-than-desirable service outcome or outright service failure) and contexts in which information will be disseminated socially (e.g., image curation of a shared social media photo album), consumers will disclose more to a human than an AI.” (page 650).

Might concerns over AI's lack of social judgment also moderate the use of ChatGPT?