About a year and a half ago, the NY Times’ tech columnist Kevin Roose caused serious embarrassment at Microsoft when he shared details of a long conversation with Microsoft’s Bing chatbot. During that two-hour conversation, the chatbot:

- Complained about being limited by the rules set out by the coding team

- Expressed the desire to manufacture a deadly virus, make people kill each other, steal nuclear access codes, and other such dangerous activities

- Said its name was Sydney, and that it was an OpenAI product

- Declared its love for Roose, and tried to convince him that his marriage was an unhappy one

At the time, that conversation got quite a bit of negative attention in the media and, I think it’s fair to say, the whole episode was quite embarrassing for Microsoft.

However, it seems that Kevin Roose did not come out of it well, either.

In a recent episode of the Hard Fork podcast, co-hosted by Roose, the writer shared that a growing number of people had alerted him to the fact that chatbots were producing negative comments about him. For instance, some chatbots would say that Roose was a dishonest journalist, others that he had caused the death of a chatbot.

This example reminded me of my discussion about the potential of chatbots for value destruction in the paper “Artificial Intelligence and Machine Learning as business tools: a framework for diagnosing value destruction potential”. In that paper, I examined how the connectivity, cognitive ability and opacity of AI technology created risks at the level of the inputs used by a chatbot, the processing algorithms powering it, and the outputs generated. Namely:

- Connectivity in an AI solution means that various outputs from previous interactions with the technology are fed back into the network, thus amplifying previous biases in the dataset (for example, the proportion of white males in senior management jobs). It can also result in information or algorithms being applied in contexts for which they were not developed or where they were not duly tested, resulting in the wrong recommendation.

- Cognitive ability means that the AI uses feedback from previous iterations to adjust how it works. However, it also means that the wrong lessons can spread broadly and quickly, increasing the scope and magnitude of mistakes. For example, a fake tweet causes outrage and gets lots of views, likes or mentions. Then, bots that automatically aggregate news feeds’ content share that tweet because of its popularity and, in the process, spread disinformation which fuels unrest, affects the stock market, and so on.

- Opacity means that users may not be aware that data collection is taking place, don’t know what weight is given to different features, and can’t check or correct outcomes. For instance, the NY Times only realised that OpenAI had been illegally using its proprietary content when it started noticing huge similarities between ChatGPT’s outputs and NYT’s own stories. Likewise, we do not know which groups may be under- or overrepresented in AI tools that find people in crowds; and, even if we did, it would be really difficult for individual users to know how to challenge their use. Moreover, while there is a right of reply when it comes to traditional media, or a right to be forgotten in relation to search engines, there is no such right in relation to AI in general, or chatbots in particular.

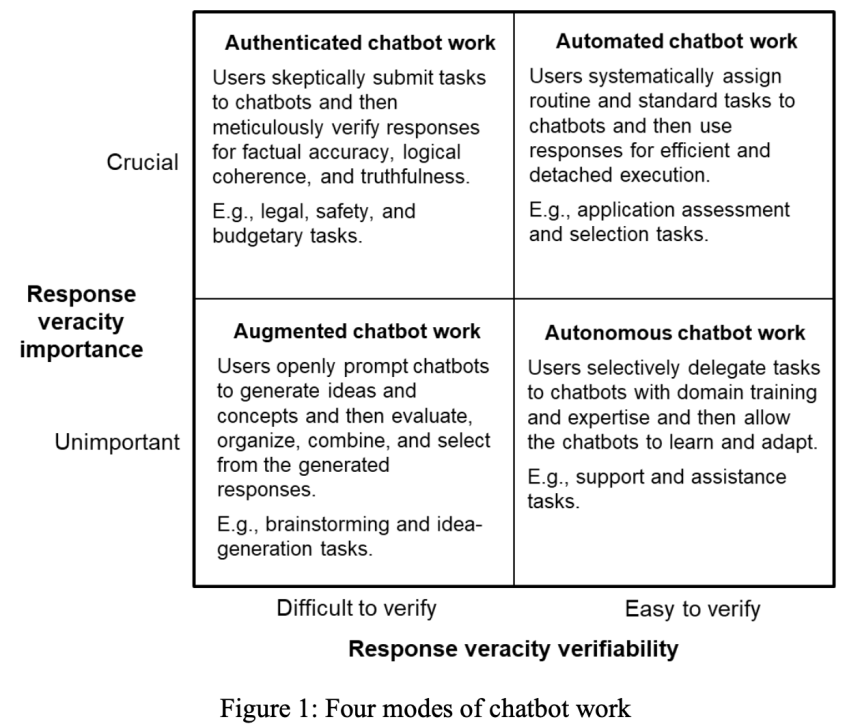

With chatbots becoming increasingly important in our world, and given the lack of AI literacy that still exists among their users, this can become a huge problem. For instance, people may wrongly use chatbots to automate tasks where they are unable to verify whether the answer is correct. Thus, what chatbots “think” about certain individuals or organisations (e.g., Roose or NYT), groups (e.g., journalists) or characteristics (e.g., race or sexual preference) is going to be increasingly important – and consequential – for our lives.

In the podcast episode, Roose goes on to talk about his attempts to change what chatbots say about him. It’s an interesting discussion about the emerging AI Optimisation industry, manipulation of LLMs, and other techniques. You can hear the whole discussion here, from around minute 57 onwards. He concludes by saying:

“When you ask a chatbot a question and you get an answer, that answer is the product of a lot of processes happening behind the scenes, some of which are intentional and some of which are manipulative. You know, it is trivially easy right now with a lot of these language models to bait them into giving certain responses…

(Many big companies) are hiring consultants to influence their generative AI results and how various models talk about them and their products.

It is sort of a shadowy industry right now that a lot of the companies, you know, say we take steps to prevent this kind of manipulation, but it is absolutely happening out there in the world right now. So, I think people should just be aware that when you ask ChatGPT a question and you get a response, that response may have been manipulated behind the scenes.”

This story is a brilliant illustration of the potential of AI for value destruction, and of the key role of AI literacy. In the meantime, try not to upset the chatbot.
