In the paper “Artificial Intelligence and Machine Learning as business tools: factors influencing value creation and value destruction”, Fintan Clear and I argue that the often-repeated claim that AI solutions are “cheaper, faster, and less prone to mistakes than humans” reveals a narrow assessment of the costs associated with deploying an AI solution in an organisational context. Such an assessment tends to consider only the direct and short-term costs of deploying the technology, and to ignore costs such as:

- the potential for reputational damage

- trade-offs (e.g., calculation speed versus confidence; or accuracy versus interpretability of the algorithm)

- acquiring the necessary analytical capabilities and big-data-handling skills

And, now, evidence is emerging about an additional cost: customer misbehaviour.

TaeWoo Kim and his colleagues Hyejin Lee, Michelle Yoosun Kim, SunAh Kim and Adam Duhachek found, in a series of experiments, that customers are more likely to engage in dishonest behaviour (e.g., lying or committing fraud) when interacting with an AI than when interacting with a human customer service agent. They detail the experiments in the paper entitled “AI increases unethical consumer behavior due to reduced anticipatory guilt”.

Customer dishonesty is problematic for organisations for three reasons. First, the behaviour itself carries direct costs (e.g., fraudulent claims). Second, customer misbehaviour is contagious: evidence of misbehaviour by previous customers increases the likelihood of misbehaviour by subsequent customers. And, third, customer misbehaviour harms staff morale.

In our paper about AI and value destruction, Clear and I argue that, to identify the value-destruction potential of AI technology before it is deployed, managers need to:

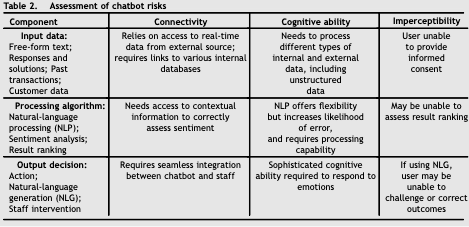

- Map the components of the whole system: the data inputs, the algorithms that process the data, and the outputs.

- Then, analyse how AI’s connectivity, cognitive ability and imperceptibility create specific risks. For instance, connectivity means that data inputs may be corrupted, incomplete, or misleading; that processing algorithms may be chosen for their compatibility rather than their performance; and that poor-quality outputs spread broadly and quickly, increasing the scope and likelihood of mistakes.

Further, we exemplify these principles in relation to the use of AI-powered chatbots to handle customer complaints. We identify the following risks (and, thus, potential costs):

Have you come across any other interesting studies regarding the costs associated with the deployment of chatbots or other AI technology?