Apparently, autonomous robotic vacuum cleaners (e.g., Roombas) and dog poos don’t mix well. I had no idea, as I have neither a Roomba nor a dog, but I have now learned that this is a common problem faced by pet owners, as reported in this 2016 article in The Guardian.

Maybe I should use the past tense in that sentence, though, because iRobot, the company behind Roomba, has upgraded its vacuums with artificial intelligence (AI) that detects – and avoids! – dog mess. AI to the rescue. No more “poopocalypse” stories.

Vacuum cleaners are not the only household product to get the AI treatment. Video streaming (e.g., Netflix), music streaming (e.g., Spotify), voice assistants and smart speakers (e.g., Alexa), and home security systems equipped with facial recognition capabilities come to mind. And many others are already here, or in the pipeline.

What are the consequences, for consumers, of embedding AI in household products?

This is one of the questions examined in the report “Study on the Impact of Artificial Intelligence on Product Safety”, prepared by the Centre for Strategy and Evaluation Services (CSES) for the Office for Product Safety and Standards (OPSS).

Before we look into some of the report’s conclusions, it is important to clarify that, even though the report uses the term AI, the discussion is really only about machine learning (it’s a bit like people using the term “marketing” to refer to “advertising”, which is only one very small part of marketing)*. Moreover, as the authors go to great lengths to note, many of the products on the market that are described as “smart” or “AI enabled” are really only internet of things products, and there is no intelligent, or indeed learning, element in the product itself**.

The CSES report focuses specifically on product safety***, although, to be fair, the authors look at product safety in broad terms, considering both direct and indirect effects. Here is an overview of the main issues identified in the report, followed by my thoughts.

Product safety – Benefits and opportunities

The authors posit that the sensors and analytical capabilities of AI-enabled products can enhance product safety directly via:

- Identification of unsafe product usage – e.g., headphones playing so loudly that they could damage the wearer’s hearing, or prevent the wearer from hearing an approaching vehicle.

- Optimisation of product performance – e.g., detecting fridges that are overloaded or have restricted airflow.

- Predictive maintenance – i.e., enabling the manufacturer to monitor when the equipment needs to be repaired, thus preventing product failures or accidents.

Safety can also be improved indirectly through:

- Improving the product design

- Detecting rare events and potential problems before the products leave the assembly line, thus avoiding mass product recalls

- Sourcing alternative suppliers, in the case of disruptions to the supply chain

- Reducing production errors, via delivery of targeted instructions during product assembly

- Monitoring product quality throughout the supply chain and the product’s life cycle

- Customising the product to the user’s characteristics (e.g., weight, height, disabilities)

- Detecting, analysing and preventing cyber-attacks, which can target critical infrastructure

Product safety – Challenges and risks

The authors also identify a number of challenges created by the use of AI in household products:

- Robustness and predictability – Refers to cases where the product fails, or struggles, to adapt to an environment that differs from the one used to train the algorithm or test its performance. An example (not from a household product) was the failure of an Uber self-driving test car to react to a pedestrian it had detected crossing the road outside a zebra crossing.

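- Transparency – Refers to people’s lack of awareness that AI is being used, as illustrated by Google’s demo of its Duplex assistant, which made phone bookings without telling the person at the other end that they were talking to an AI.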

- Explainability – Relates to the inability to explain how a particular decision (e.g., a movie recommendation) was reached, and thus to challenge it, because of the opacity of the algorithms.

- Security – Refers, for instance, to the use of unencrypted communications between the devices and backend servers, which can enable unauthorised access to sensitive data, as well as hacking of the devices themselves.

- Resilience – As these devices usually require internet connectivity to work properly, a loss of connectivity could result in product failure. The example given in the report is that of a connected fire alarm that stops working when it loses internet access and, thus, fails to alert residents that a fire has started somewhere in the house.

- Fairness and discrimination – Refers to unfair outcomes resulting from the over- or under-representation of certain demographic groups in the dataset; for instance, failing to recognise certain accents, or to adapt to individuals with speech impairments. Such outcomes could also be caused by biased assumptions informing the design of the algorithm. Do you remember my “national anthem” episode? This could affect both the current use of the product and its future development, as, presumably, usage data becomes the basis for future product development.

- Privacy and data protection – Refers to the depth and breadth of insight that may be derived from analysing ostensibly non-sensitive data. An example that comes to mind is when a Fitbit customer support assistant recognised that a client was pregnant before she herself was aware of it, because the device was picking up two heartbeats.

My thoughts

I find this list of pros and cons quite good, and I will certainly be using it in my classes and consulting work to guide the analysis of the consequences of using AI. However, in my view, there are three additional risks that need to be considered.

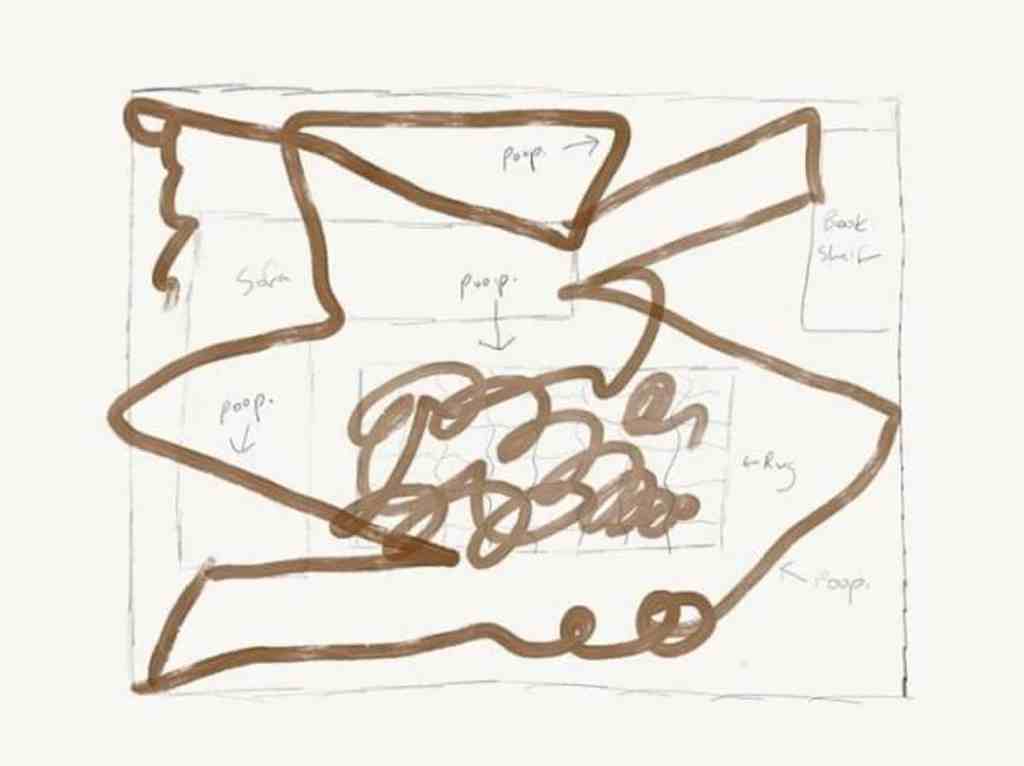

The first one is the definition of goals. Machine learning algorithms optimise for the goal that they are set, in a very literal fashion. For instance, if the goal of a game-playing algorithm is not to lose, it may simply pause the game indefinitely, which undermines the game’s value as a source of entertainment. Or, as discussed at great length in the documentary “The Social Dilemma”, if the goal of a content streaming algorithm is to increase engagement with the platform, it will end up showing more and more extreme content, which is detrimental both to the individual watching that content and to society as a whole. Or, in the “poopocalypse”, the robot’s focus on cleaning a particular spot led it to keep going back and forth over the mess, actually spreading the problem.

The second one is the lack of diversity in the teams designing, testing and implementing AI solutions. This goes beyond the issue of biased datasets, because it influences the questions being asked of the data and the use cases being considered. For instance, because I don’t have a pet running around the house, it would never occur to me to wonder what might happen if said pet had an “accident” at home and my helpful Roomba came across the resulting “poop”.

And, finally, there is the issue of overestimating AI’s abilities. Going back to the “poopocalypse” case, it seems that the problem was not detected by AI’s superior analytical powers in crunching all the data that the robots were collecting. Instead, the problem surfaced because users started complaining about it, some directly to the manufacturer, others on social media. Thus, if manufacturers, product designers, regulators, etc. rely solely on AI to detect and report problems, and reduce investment in other feedback channels, this can actually reduce product safety for users. As Janelle Shane hilariously demonstrates in her book “You Look Like a Thing and I Love You”, the problem with AI is not that it is super intelligent, but that it is depicted and treated as such, when it is actually very, very dumb.

*See part 2 of the report

**See part 3 of the report

***See part 4 of the report

I do have a robot vacuum cleaner (but no dog). The manufacturer claims the machine uses AI to map out each room in order to clean it more efficiently. Watching it in action and studying the map of where it’s been each night, I doubt it. Instead it seems to have been given Genuine People Personality (to Sirius Cybernetics Corporation). It likes to hide under beds and to try to find its way into rooms which are closed off.