Chatbots are computer programmes designed to conduct conversations with humans about specific topics, through text, voice or touch. Because they can run 24/7, chatbots are becoming increasingly popular in situations where there are frequently asked questions which can be resolved from a limited pool of answers. Examples include accepting an order, updating the status of an order, providing information about opening hours or contact details or confirming price options.

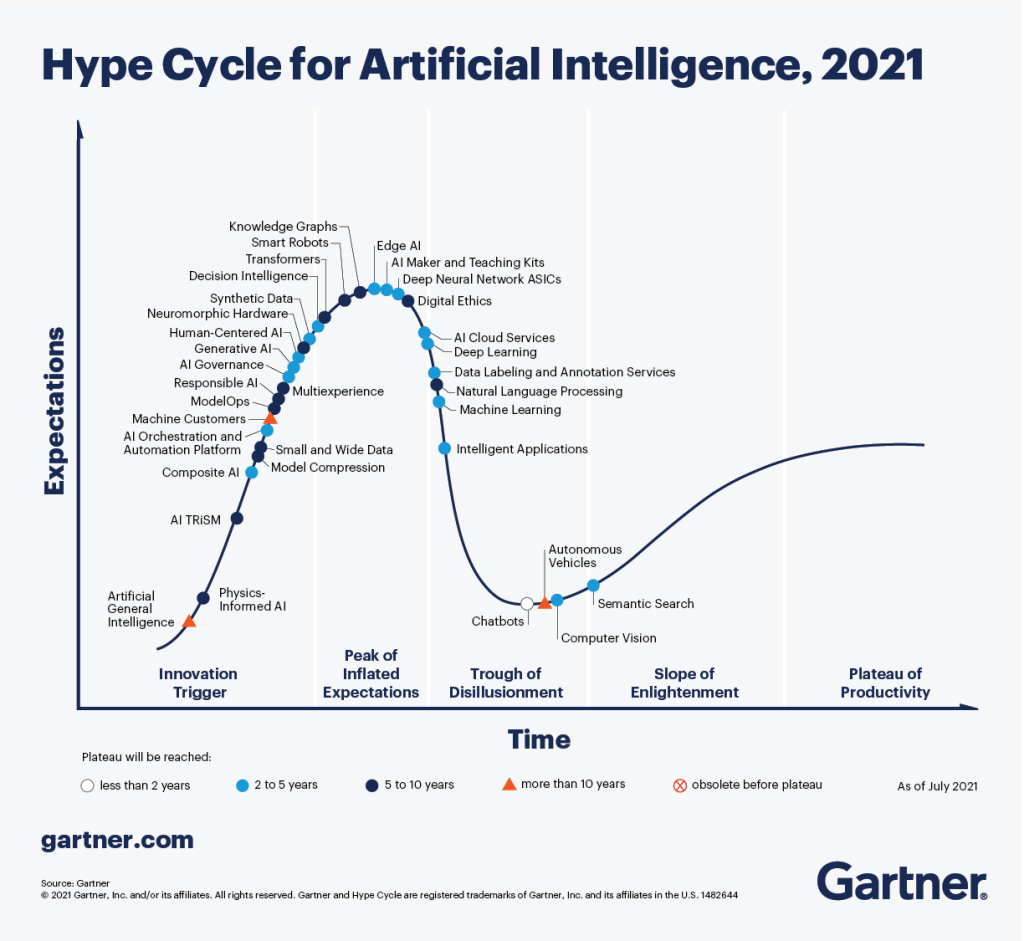

In principle, chatbots are quite simple to design. First, create a database of predefined, common questions with associated answers (called dialogues). Then, create an interface for the user to enter a question (the query). Finally, use Artificial Intelligence* to match the user’s query with one of the predefined questions in the dialogue database, and present the corresponding answer to the user. Yet, despite its promise and apparent simplicity, this technology is failing to live up to expectations, as symbolised by Gartner’s placement of chatbots in the “Trough of Disillusionment” of AI technologies in 2021.
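
The three steps above can be sketched in a few lines of Python. This is only an illustration: the dialogues, the `match_query` function and the 0.6 threshold are made up for the example, and plain string similarity from the standard library's `difflib` module stands in for whatever AI matching a real chatbot platform uses.

```python
from difflib import SequenceMatcher

# Hypothetical dialogues: predefined question -> predefined answer.
DIALOGUES = {
    "What are your opening hours?": "We are open 9am to 5pm, Monday to Friday.",
    "How can I contact you?": "You can email us at any time.",
    "Where is my order?": "Please enter your order number to see its status.",
}

FALLBACK = "Sorry, I did not understand. Could you rephrase your question?"


def similarity(a: str, b: str) -> float:
    """Return a 0-1 similarity score between two strings (case-insensitive)."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def match_query(query: str, threshold: float = 0.6) -> str:
    """Return the answer of the most similar predefined question, or a fallback."""
    best_question = max(DIALOGUES, key=lambda q: similarity(query, q))
    if similarity(query, best_question) >= threshold:
        return DIALOGUES[best_question]
    return FALLBACK


print(match_query("what are the opening hours"))  # close to a predefined question
print(match_query("Tell me a joke"))              # nothing similar in the database
```

Everything that follows in this post is, in one way or another, about how hard it is to pick the predefined questions and the threshold well.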

I came across a paper that details the development of one such AI-powered chatbot. Papers of this type, offering an “under the hood” view of technology development, are rare. Thus, this paper provides an interesting insight into the process of developing this type of chatbot, and into the challenges encountered by the team.

The paper is entitled “Ask Rosa – The making of a digital genetic conversation tool, a chatbot, about hereditary breast and ovarian cancer”, and was published in the “Patient Education and Counseling” journal (open access). It was authored by a team of 10 researchers based in Norway: Elen Siglen, Hildegunn Høberg Vetti, Aslaug Beathe Forberg Lunde, Thomas Akselberg Hatlebrekke, Nina Strømsvik, Anniken Hamang, Sigrid Tronsli Hovland, Jill Walker Rettberg, Vidar M. Steen and Cathrine Bjorvatn.

About the chatbot

The chatbot was developed to answer frequently asked questions about breast and ovarian cancers, such as the process of testing for genetic variations, or the consequences of carrying specific genetic variants (e.g., the risk of developing cancer). In the paper, the authors describe the technology used, as well as the process for developing the dialogues that underpin the chatbot.

In the first iteration, they developed 500 such dialogues. The pilot tests revealed that, out of the 822 questions asked by the testers, the AI was unable to match 303 (37%) to any of the pre-developed dialogues. Of the remaining 519 questions, 169 (33% of 519; 21% of 822) received wrong answers, and only 350 (67% of 519; 43% of 822) received a correct answer. That is, fewer than half of all questions asked by users received the answer that they were meant to receive.

| | Number of questions | % of total Qs | % of questions matching dialogue database |
|---|---|---|---|
| Total questions | 822 | | |
| Question does not match dialogue database => Fall back answer | 303 | 37% | |
| Question matches dialogue database => Answer provided | 519 | 63% | |
| Answer provided is wrong | 169 | 21% | 33% |
| Answer provided is correct | 350 | 43% | 67% |
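
The percentages follow directly from the counts reported in the paper. Here is a quick check in Python (the variable and function names are mine, not the authors'):

```python
total = 822                  # questions asked by the testers
unmatched = 303              # no matching dialogue -> fallback answer
matched = total - unmatched  # a dialogue was matched and an answer given
wrong = 169                  # matched, but the answer was wrong
correct = matched - wrong    # matched, and the answer was correct


def pct(part: int, whole: int) -> int:
    """Percentage of `whole` represented by `part`, rounded to a whole number."""
    return round(100 * part / whole)


shares = {
    "fallback, of all questions": pct(unmatched, total),
    "answered, of all questions": pct(matched, total),
    "wrong, of all questions": pct(wrong, total),
    "correct, of all questions": pct(correct, total),
    "wrong, of answered questions": pct(wrong, matched),
    "correct, of answered questions": pct(correct, matched),
}

for label, value in shares.items():
    print(f"{label}: {value}%")
```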

The team proceeded through three more iterations of the app, each time manually analysing the questions submitted and the associated outcomes to try and understand the reasons for the fallback or wrong answers, as well as interviewing the testers about their experience of using the chatbot.

They identified the following technology- and user-related challenges.

Technology-related challenges

- Distinguishing between questions with similar wording but referring to very different issues and, thus, requiring different answers.

Consider, for example, the questions “What is the risk of ovarian cancer with BRCA1 mutation?” and “What is the risk of ovarian cancer with BRCA2 mutation?”. Even though the two questions differ by a single digit, they refer to distinct genetic variants with very different risks and, thus, should be directed to different sets of dialogues.

The authors explained that “To make the chatbot able to differentiate questions at this level, the threshold for similarity of the wording would need to be almost 100%. However, this threshold will disable the AI function and leave the chatbot with providing only answers that match perfectly, leading to a high fraction of fallback answers.” (page 14).
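
The dilemma the authors describe can be illustrated with a small sketch. It assumes character-level string similarity from Python's `difflib` as a stand-in for the chatbot's (unspecified) matching method. The paraphrase and the 0.99 threshold are hypothetical, chosen only to show the trade-off.

```python
from difflib import SequenceMatcher


def similarity(a: str, b: str) -> float:
    """Character-level similarity between 0 and 1 (case-insensitive)."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


predefined_brca1 = "What is the risk of ovarian cancer with BRCA1 mutation?"
predefined_brca2 = "What is the risk of ovarian cancer with BRCA2 mutation?"
# Hypothetical rewording a user might type when asking about BRCA1.
user_paraphrase = "How likely is ovarian cancer if I carry a BRCA1 mutation?"

wrong_variant = similarity(predefined_brca1, predefined_brca2)
right_variant_reworded = similarity(user_paraphrase, predefined_brca1)

print(f"BRCA1 vs BRCA2 wording:      {wrong_variant:.2f}")
print(f"Paraphrase vs BRCA1 wording: {right_variant_reworded:.2f}")

# Hypothetical threshold, set just high enough to keep the two variants apart.
strict_threshold = 0.99
print("Variants confused:", wrong_variant >= strict_threshold)
print("Paraphrase accepted:", right_variant_reworded >= strict_threshold)
```

In this sketch, the two predefined questions are more similar to each other than a genuine rewording is to the question it should match. Any threshold strict enough to separate the variants therefore also rejects the rewording, which is exactly the rise in fallback answers that the authors warn about.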

To solve the problem, they merged the predefined answers for both options, creating a joint one that reports the risks for each variant. That is, people asking the first type of question get the same answer as people asking the second one, covering both risks. While this solves the technical problem, it does mean that users get less specific answers to their questions. This solution also requires the involvement of highly qualified personnel to decide when it is OK to give a generic rather than a specific answer, based on the risk that an incorrect answer poses to users.

- Achieving a balance between a generic answer and no answer

When there isn’t a direct correspondence between the question asked by the user and one of the dialogues, the AI has two options: either provide a fallback answer asking the user to try again using different words, or look for specific keywords, and then provide a generic answer related to the keyword.

However, the users were really frustrated with the volume of generic answers provided for certain keywords (namely, the Norwegian words for breast cancer and for ovarian cancer). They preferred receiving the fallback answer prompting them to try again, rather than a generic – but, in their view, useless – answer.

To solve the problem, in the short term the team reviewed all the keywords used, and deleted the two mentioned above, among others. Later, they developed additional predefined questions related to these themes. The authors say that “each dialogue needs at least 20 related predefined questions for the embedded AI to work optimally” (page 13).
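
A minimal sketch of this routing logic, and of the effect of deleting a broad keyword, might look as follows. All names, the placeholder answers and the 0.8 threshold are hypothetical, and `difflib` string similarity again stands in for the real matching method.

```python
from difflib import SequenceMatcher

# Hypothetical dialogue database with placeholder answers.
DIALOGUES = {
    "How is the genetic test done?": "[specific answer about the testing process]",
}
GENERIC = "[generic information about breast cancer]"
FALLBACK = "I did not understand. Please try again using different words."


def similarity(a: str, b: str) -> float:
    """Character-level similarity between 0 and 1 (case-insensitive)."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def respond(query: str, keyword_answers: dict, threshold: float = 0.8) -> str:
    """Specific answer if a dialogue matches, else keyword answer, else fallback."""
    best = max(DIALOGUES, key=lambda q: similarity(query, q))
    if similarity(query, best) >= threshold:
        return DIALOGUES[best]
    for keyword, answer in keyword_answers.items():
        if keyword in query.lower():
            return answer
    return FALLBACK


query = "Can breast cancer skip a generation?"

# Before the fix: the broad keyword triggers a generic answer.
print(respond(query, {"breast cancer": GENERIC}))

# After the fix: the keyword is deleted, so the user is asked to rephrase.
print(respond(query, {}))
```

The longer-term fix described in the paper corresponds to adding more entries to `DIALOGUES`, so that fewer queries reach the keyword or fallback branches at all.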

User-related challenges

- Different styles of asking questions

The team “observed that some users tended to write long and explanatory questions. When asked about this observation they said this was to ensure that the chatbot understood their question correctly. Others chose to write questions in a keyword format or SMS-like style, similar to googling. When asked about this approach, they said they wanted to see what predefined answers that existed in the chatbot about particular topics” (page 12).

The authors write that “As chatbot technology is something most people are unfamiliar with, it is only natural to approach it the way you would approach either a human conversation, or a google search. However, as a chatbot provides the one most fitting answer to the user-provided question, this strategy will increase the fallback rate” (page 15).

This is something that my doctoral student, Daniela Castillo, found as well, as discussed here.

- Wanting to understand the chatbot’s workings

The authors reported that the testers “were frustrated by not knowing what information was available in Rosa and how to ask the best questions in order to extract this information” (page 11).

This reflects something that I found in research in other settings (namely, personalised recommendations; paper currently under development): people want to understand why they got a particular result, so that they can use the system better and get more relevant results.

In the case of Rosa, the team added a FAQ page for new users. However, there is a limit to how much researchers can explain about the workings of the chatbot, because often they don’t fully understand them themselves.

- Wanting easy access to a human

When asked about desired usability features, the testers wanted to be able to easily reach a genetic counsellor. This desire for the ability to transfer to a human counterpart was very much present in Daniela’s research, too. This shows that, while chatbots may be a very useful first point of contact (if they work!), they are still very much seen as an inferior option to engaging with a member of staff, and as a gateway (or hurdle) to reaching one. Daniela also found that users wanted a seamless transfer of information between the two platforms, such that they did not have to start from scratch.

The result

The paper describes the four iterations of the app. In each one, the team added new dialogues to the database. At the time of writing their paper, the team had created 2,256 dialogues, an increase of 351% over the 500 dialogues of the first iteration. Their final fallback rate was 13%, but work continues on the app.

The increase in number of dialogues corresponds to two developments:

- Increasing the number of possible answers, from 67 in the first iteration to 144 in the fourth one, to address a wider range of issues worrying the patients.

- Increasing the number of possible questions leading to the same answer, from an average of 7.5 in the first iteration to 15.7 in the fourth one, to allow for more ways of asking questions about a specific topic.
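
These figures are consistent with one another: the number of answers multiplied by the number of questions per answer gives, to within rounding, the number of dialogues. A quick check in Python, using only the figures quoted above (the variable names are mine):

```python
first = {"answers": 67, "questions_per_answer": 7.5, "dialogues": 500}
fourth = {"answers": 144, "questions_per_answer": 15.7, "dialogues": 2256}

for label, it in (("first", first), ("fourth", fourth)):
    # Dialogues implied by the answer count and the questions-per-answer figure.
    estimate = it["answers"] * it["questions_per_answer"]
    print(f"{label} iteration: estimated {estimate:.0f}, reported {it['dialogues']}")

# Percentage increase in dialogues between the first and fourth iterations.
increase = 100 * (fourth["dialogues"] - first["dialogues"]) / first["dialogues"]
print(f"increase in dialogues: {increase:.0f}%")
```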

They also embedded “a menu providing information about privacy, a presentation of Rosa and the research team, brief general information about hereditary breast and ovarian cancer, and a FAQ page” (page 12).

It was a long process (two years from the start to the completion of the pilot version). They recommend adopting a participatory design for chatbot development, like they did, but note that “With every iteration, there is a large amount of rebuilding work. High involvement by both end users and health care personnel throughout the process is vital, making validation of the predefined chatbot-provided answers by experts a crucial part of the process” (page 15).

The authors conclude with the following assessment and recommendation: “The beauty of a chatbot is in its simplicity. This simplicity is also a chatbot’s main threat. The manual labour required to build a robust chatbot is still substantial. A chatbot does not have to provide a perfect human-like conversation; in fact, it should not be mistaken for a human, it should be valued for what it is, a support tool at your service providing accurate information in lay language… (A)rtificial intelligence used in health care must pass the implementation game rather than the imitation game.” (page 16)

I found this insider look at the development of a chatbot really interesting. Did you come across other such descriptions?

*Not all chatbots use artificial intelligence. Here, I am focusing on AI-powered chatbots, only.
